Designing AI mentors students actually use: A mixed-methods investigation

Project Overview

As a research analyst at the Penn Center for Learning Analytics, I led a mixed-methods study of how students interact with an AI mentor agent in a computer-based science program, and whether those interactions actually improve learning. I coordinated a cross-functional five-person team spanning Human-Computer Interaction, Sociology, and Education. We combined system interaction logs, learning assessments, and 358 real-time interviews conducted with students as they used the program. These complementary data sources allowed us to capture both what students did and why they did it.

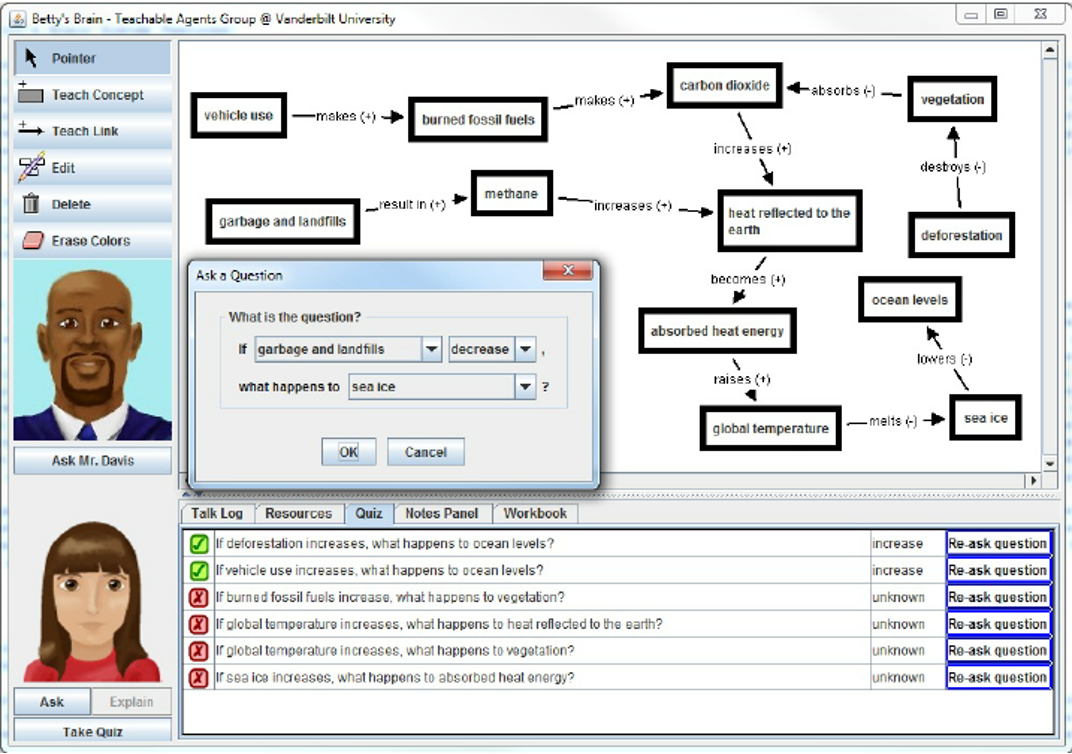

The study focused on Betty's Brain, a computer-based science education program where students learn by teaching. Students build visual causal maps to teach a virtual character named Betty. When students get stuck, an AI mentor agent called "Mr. Davis" provides two types of help:

Student-initiated help: Students can ask Mr. Davis questions

System-initiated help: Mr. Davis proactively offers suggestions based on student activity

Research Objectives

We designed the study with three primary research objectives:

Understand how students actually use the mentor agent

Determine whether help behaviors correlate with learning outcomes

Uncover why students engage with (or ignore) the mentor agent’s assistance

Research Approach

The participants were 92 sixth-grade students at a Tennessee middle school. They used Betty's Brain for four days (~50 minutes/day), engaging with the climate change module.

We drew on three data sources:

1. Computer Interaction Logs

Timestamped record of every student action over four days

Help behavior record: The logs enabled us to track who asked for help (and how many times), what Mr. Davis suggested, and whether students followed his instructions

Automated emotion detection: Machine learning algorithms predicted students' emotional states (boredom, confusion, engagement, delight, frustration) every 20 seconds based on their interaction patterns
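As a rough illustration of how a help behavior record like the one above could be derived from timestamped logs, the sketch below counts help requests and computes a per-student acceptance rate. The event names (`help_requested`, `suggestion_offered`, `suggestion_followed`), the student IDs, and the rows themselves are hypothetical placeholders; they are not the actual Betty's Brain log schema or study data.

```python
from collections import defaultdict

# Hypothetical log rows: (student_id, event_type). Event names and rows are
# illustrative assumptions, not the real Betty's Brain log format or data.
events = [
    ("s01", "help_requested"),
    ("s01", "suggestion_offered"),
    ("s01", "suggestion_followed"),
    ("s01", "suggestion_offered"),
    ("s02", "suggestion_offered"),
    ("s02", "suggestion_offered"),
    ("s02", "help_requested"),
    ("s02", "help_requested"),
]


def summarize_help(events):
    """Return help-seeking counts and help-acceptance rates per student."""
    counts = defaultdict(lambda: {"requested": 0, "offered": 0, "followed": 0})
    for student, event in events:
        if event == "help_requested":
            counts[student]["requested"] += 1
        elif event == "suggestion_offered":
            counts[student]["offered"] += 1
        elif event == "suggestion_followed":
            counts[student]["followed"] += 1

    summary = {}
    for student, c in counts.items():
        # Acceptance rate is undefined if no suggestion was ever offered.
        rate = c["followed"] / c["offered"] if c["offered"] else None
        summary[student] = {
            "help_requests": c["requested"],
            "acceptance_rate": rate,
        }
    return summary


summary = summarize_help(events)
```

Keeping the two measures in separate fields reflects the distinction between student-initiated and system-initiated help: a student can request help often while following few unsolicited suggestions, or the reverse.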

2. In-Situ Interviews (n=358)

The research team conducted brief interviews with students while they used the program

Quantitative coding: We tallied positive and negative statements about Mr. Davis

Qualitative interpretation: We used affinity diagramming to identify themes in student perspectives

3. Learning and Anxiety Measures

Pre- and post-tests measuring climate change knowledge

Surveys assessing science anxiety

This multi-method approach enabled us to see what students did (interaction logs), how they felt (emotion detection), what they thought (interviews), and what they learned (assessments)—revealing insights that no single method could capture alone.

Key Findings

1. Students Overwhelmingly Ignore Unsolicited Help. Students followed Mr. Davis's unsolicited suggestions only 6% of the time. More than two in five (42%) never accepted a single suggestion, and even the most cooperative student followed only 50% of Mr. Davis's suggestions.

2. Help Acceptance and Help Seeking Are Distinct Behaviors. Students who frequently ask for help aren't the same ones who accept unsolicited advice. These represent two fundamentally different interaction patterns.

3. Only Help Acceptance Correlates with Learning. Students who accepted Mr. Davis's unsolicited suggestions showed improved post-test performance. Help-seeking showed no correlation with learning outcomes: asking for help more often was not associated with better understanding.

4. High Engagement ≠ Positive Experience. Perhaps counterintuitively, students who interacted most with Mr. Davis—both those who accepted help and those who frequently requested it—made the most negative comments about him. Our analysis of the qualitative data suggests that students wanted direct answers. When Mr. Davis's responses left them uncertain or frustrated, they blamed him for not being helpful enough.

Insights for EdTech Designers

1. Distinguish Between Different Types of Help Behaviors. Help acceptance and help-seeking are distinct behaviors with different relationships to learning: in this study, only help acceptance correlated with post-test performance. EdTech designers should track these patterns separately and design accordingly.

2. Students Ignore Unsolicited Advice. Students followed unsolicited suggestions only 6% of the time, and 42% never accepted a single one. Designers creating AI tutors should reconsider heavy reliance on system-initiated assistance.

3. Bridge the Gap Between Pedagogical Design and Student Expectations. Students wanted direct answers; the AI tutor encouraged independent problem-solving, and this disconnect appears to have caused student frustration. Proactive coaching—whether from a classroom teacher or embedded within the computer-based program itself—could help students understand what kind of help to expect and how to use it effectively, making them better at leveraging the resources available to them.